You’re right, I’ve responded to a few comments here and I thought it was another comment thread I was replying to.

I don’t get why people who don’t like their content bother hating them.

Because, for good or bad, they have significant influence in the tech world. And since that influence is more bad than good, people don’t like them.

Take the Linux challenge, for example. They massively misrepresented the usability of Linux for the average person and for gamers. They even concluded at the end of their challenge that Linux was unsuitable for most gamers. And the release and success of the Steam Deck shortly afterwards was quite delicious.

Then there was the bit where Linus didn’t read the warning about the package manager removing the desktop environment and just hit yes, then complained that it wasn’t his fault and that the system was poorly designed.

The guy literally has an issue with accountability.

You’re upset they aren’t more knowledgeable as if everyone making tech content needs to know everything.

A better statement is that I’m upset because they preach their deep and unchallengeable knowledge and act as a be-all end-all authority in tech.

But really I’m not “upset” by them. I just really dislike them and think they’re insufferable.

And I don’t watch LTT. And there are plenty of other, and objectively better, channels about tech. And I watch those better channels, including GamersNexus.

All I’ll say is I’m willing to wait and see if they improve or if they make similar mistakes.

Their entire channel is a giant mistake. All of their content is garbage by virtue of their proven flawed and subpar process. A process they admitted was flawed and, from what I’ve seen, is still flawed, right down to the garbage “corrections in the comments” nonsense they promised to fix.

They’re just going to go about business as usual and just be a little more careful with their public image. They don’t deserve the views they get.

And? No one said otherwise. The comment I was responding to made the argument that LLMs merely memorize content, which isn’t true.

Fine, you win, I misunderstood.

It’s not a competition, but I genuinely respect you for saying you misunderstood.

Once an LLM is trained, it is static and unchanging until you re-train it with new data and update the model.

Absolutely! I honestly think this is the main thing (or at least one of the main things) that prevents human-level intelligence or even sentience in LLMs.

Think about how our minds work. From the moment we’re born (really, it’s way before that) our brains are bombarded with input and feedback from every sense. It takes a person many months of that to start recognizing things. That’s also why babies sleep so much: their brains are kinda “training” and growing fast, organizing all the data into memories.

Side bar: this is actually what dreams are. Dreams are the emotions, thoughts, ideas, or whatever concepts a neuron or group of neurons is associated with, getting triggered. When we dream, it’s our brain taking the day’s inputs and building new connections. The neural connections in our brains are very much like weights, and the feed-forward process of neural activation is nearly identical to how artificial neural networks function. They aren’t called “artificial neural networks” for no reason.
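To make the weights and feed-forward bit concrete, here’s a minimal sketch of a forward pass through a tiny network. The layer sizes, the sigmoid activation, and every weight value are made up for illustration; it’s a toy, not how any particular LLM is built.

```python
import math


def sigmoid(x: float) -> float:
    """Squash a weighted sum into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases):
    """One fully connected layer: each neuron takes a weighted sum of all inputs."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def feed_forward(inputs, layers):
    """Pass activations forward through each (weights, biases) layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = dense(activations, weights, biases)
    return activations


# Hypothetical hand-picked weights: 2 inputs -> 2 hidden neurons -> 1 output.
layers = [
    ([[0.5, -0.6], [0.1, 0.8]], [0.0, -0.1]),
    ([[1.2, -0.7]], [0.05]),
]
output = feed_forward([1.0, 0.0], layers)
print(output)
```

Training is just the process of nudging those weight numbers. Once that stops, the same input always gives the same output, which is the “static and unchanging” part mentioned above.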

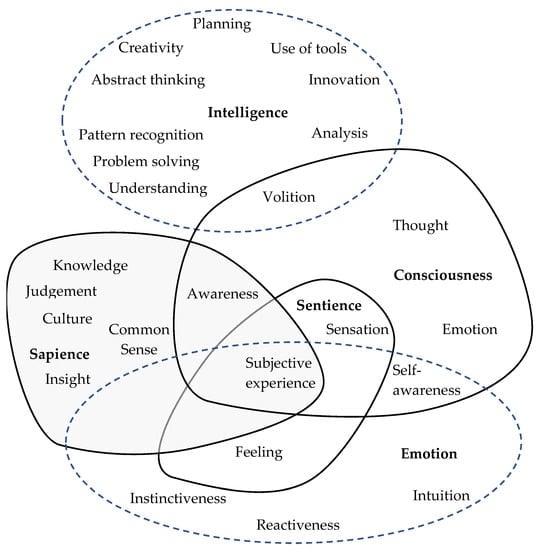

Here’s a useful graphic that shows things that make up “intelligence”

A very basic definition of intelligence is “the ability to solve problems or make decisions”.

I think the term is misused in common parlance so often that people start applying it incorrectly in a scientific setting. Kinda like how people used to call an entire computer the CPU: as with the word intelligence, everyone understands what’s being said, but it’s factually wrong.

Same thing today when people say “I bought a new GPU” when they should say “I bought a new video card” as the GPU is just a component.

I don’t understand why LTT gets so much crap from people

Because they’re clowns. Literally. Their content is pure tech entertainment with constant immature humour and little substance. The way they present themselves is like a group of teenagers messing around.

Then there’s their “expertise”. They don’t know tech beyond a Windows “power user”.

But in regards to the more recent scandal, I really think a lot of those things are fixable and I’ll be watching to see if they fix them.

Linus showed his true colours during the Billet Labs incident. He doubled down hard, and I’m convinced that even today Linus feels like he did nothing wrong. They have zero reputation to salvage, IMO.

No, don’t give those clowns more money.

but I do enjoy LTT’s content overall.

I used to as well, up until the storage server video and their Linux challenge.

I lost every shred of respect and interest after Linus showed his true colours during the Billet Labs nonsense.

Cause we got a good glimpse into the kind of person Linus was when that whole thing started: selling the prototype that wasn’t his, then going out and lying about being in contact with the company, and lying about them forgiving him and making a deal to make up for it…

10’000%

This is what all his rabidly loyal fans miss. He showed his true colours during this incident.

Doubled down?

Yes, doubled down. After being called out Linus made two separate long posts about why he wasn’t wrong.

They also formed a volunteer team of “beta tester” viewers who see each video pre-release

So using free labour instead of just doing their jobs? If they can’t “catch any mistakes internally”, then they’re just bad at their jobs (which they are).

I think they handled it well.

Yes, the PR team they used gave them a good corporate playbook to work with.

“Slowed the upload cadence” is just another way to say “wait for this to blow over”.

I used to watch LTT, mostly because it was interesting from a “let’s see what those guys have to say” angle. I had zero interest in their technical expertise because, well, they don’t really have any. They’ve always been clowns, but after their storage server video and their Linux “challenge” I lost all respect for any talent or knowledge they claimed to have. After the Billet Labs incident I lost any shred of respect I had for them.

They are clowns.

If you put a gazillion monkeys on a typewriter they can write Shakespeare.

This is a mathematical curiosity born out of pure randomness. An LLM trained on a dataset to generate similar content is quite the opposite of randomness.
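For a sense of scale on the randomness side, here’s a rough back-of-the-envelope sketch. It assumes a hypothetical 27-key typewriter (26 letters plus space) with every key equally likely; the phrase and the function name are my own picks for illustration.

```python
import math


def log10_odds_of_random_typing(phrase: str, alphabet_size: int = 27) -> float:
    """log10 of the chance that uniform random keystrokes produce `phrase` in one try.

    Each keystroke is independent, so the probability is (1 / alphabet_size) ** len(phrase).
    Working in log10 avoids underflow for long phrases.
    """
    return -len(phrase) * math.log10(alphabet_size)


phrase = "to be or not to be"
log10_p = log10_odds_of_random_typing(phrase)
print(f"One attempt at {len(phrase)} characters succeeds with probability 10^{log10_p:.1f}")
```

Even one short line is wildly unlikely per attempt, and the odds get exponentially worse with every extra character. That’s what pure randomness looks like, and it’s nothing like sampling from a trained model.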

Get a load of this maroon, they think LLMs are actually sapient!

I guess reading comprehension is as bad here as it’s ever been on the internet.

I’m pretty sure LLMs have exactly reproduced copyrighted passages.

If I asked you to recite a popular poem, nursery rhyme, a song, or book passage there’s a good chance you could. Everyone can recite things word for word.

It’s the same with LLMs: if they’re asked to generate, for example, an article by the New York Post on a specific topic the paper really did write about, then it’s similar to asking someone to recite a poem or song.

Like fuck it is. An LLM “learns” by memorization and by breaking down training data into their component tokens, then calculating the weight between these tokens.

But this is, at a very basic fundamental level, how biological brains learn. It’s not the whole story, but it is a part of it.

there’s no actual intelligence, just really, really fancy fuzzy math.

You mean sapience or consciousness. Or you could say “human-level intelligence”. But LLMs by definition have real “actual” intelligence, just not a lot of it.

Edit for the lowest common denominator: I’m suggesting a more accurate way of phrasing the sentence, such as “there’s no actual sapience” or “there’s no actual consciousness”. /end-edit

an LLM would learn “2+2 = 4” by ingesting tens or hundreds of thousands of instances of the string “2+2 = 4” and calculating a strong relationship between the tokens “2+2,” “=,” and “4,”

This isn’t true. At all. There are math-specific benchmarks made by experts to test the problem-solving and domain-specific capabilities of LLMs. And you can be sure they aren’t “what’s 2 + 2?”

I’m not here to make any claims about the ethics or legality of the training. All I’m commenting on is the science behind LLMs.

Bad argument.

It would hold water if their solution was proprietary and closed source. But it isn’t, and anyone else, literally anyone, can take Proton and use it in their project for profit.

Even if they closed shop tomorrow, or even just gave up work on Proton itself, we’d all still reap the benefits at no cost to us.

That is the point of E2EE. If anyone but the sender and receiver can see the messages then it’s not E2EE. This is the part that politicians and governments don’t understand (or just ignore). The idea that some designated authority can look at the messages when needed is entirely at odds with E2EE. It’s as valid as true = false or 2 + 2 = cat.
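If it helps, here’s a toy sketch of the idea. The prime, the generator, the XOR “cipher”, and the names Alice and Bob are all assumptions chosen to keep it short and stdlib-only; it is nowhere near secure and isn’t how any real messenger does it. The only point is who holds what.

```python
import hashlib
import secrets

# Toy parameters, assumed for illustration only: far too small and unvetted for real use.
P = 2**127 - 1  # a Mersenne prime
G = 3


def keypair():
    """Return (private, public). The private number never leaves the device."""
    private = secrets.randbelow(P - 2) + 1
    return private, pow(G, private, P)


def shared_key(my_private: int, their_public: int) -> bytes:
    """Diffie-Hellman style agreement: both ends derive the same 32-byte key."""
    secret = pow(their_public, my_private, P)
    return hashlib.sha256(str(secret).encode()).digest()


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Toy cipher: XOR with a repeating key. Applying it twice restores the input."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


alice_priv, alice_pub = keypair()
bob_priv, bob_pub = keypair()

message = b"meet at noon"
ciphertext = xor_bytes(message, shared_key(alice_priv, bob_pub))

# A relay server only ever handles alice_pub, bob_pub and ciphertext.
plaintext = xor_bytes(ciphertext, shared_key(bob_priv, alice_pub))
print(plaintext)
```

There’s no step where the server could read the message without one of the private keys. Giving a “designated authority” access means handing a private key (or the plaintext) to a third party, and at that point the two ends aren’t the only ends any more.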

Epic has exclusivity on release

Wait, really? It’s officially off my list now. Screw those guys.

Find me another company that supports open source and Linux the way Valve does… I’ll wait

No digital game store is worth your loyalty.

When that store is run by a company that contributes massively to open source and works harder and puts more money into enabling alternate platforms for gaming than all other companies combined; ya, they have my loyalty.

I would love to see reasonable competition to steam which would give consumers and developers better options

No one’s going to compete with Steam and outdo it on Linux support.

Yes, literally every single person on this planet can recite a song or poem.

But there are naturally massive differences between a human brain and an LLM. The point I was making is that an LLM doesn’t copy and store books and articles wholesale. The ability to reproduce samples from the dataset is more of a quirk than a feature, in the same way that a person can memorize things.